In this whistleblower-style exposé, the anonymous Lead Algorithm Engineer who designed the artificial intelligence detection software for a major global publisher breaks their non-disclosure agreement. The article argues that the digital gatekeepers guarding Q1 journals are mathematically flawed and structurally biased. Describing what the author calls "linguistic redlining," it details how the algorithms mistake the rigid, formal syntax of international ESL (English as a Second Language) scholars for machine-generated text. The author alleges that elite publishing boards are aware of this high false-positive rate but use the flawed software anyway to purge their overwhelming submission queues, effectively destroying the careers of innocent international researchers.

For the last three years, I have worked as a Lead Algorithm Engineer for one of the three largest academic publishing conglomerates in the world. You know our journals. You cite our papers. You spend your entire career trying to get past our submission portals.

I am the person who wrote the code guarding those portals.

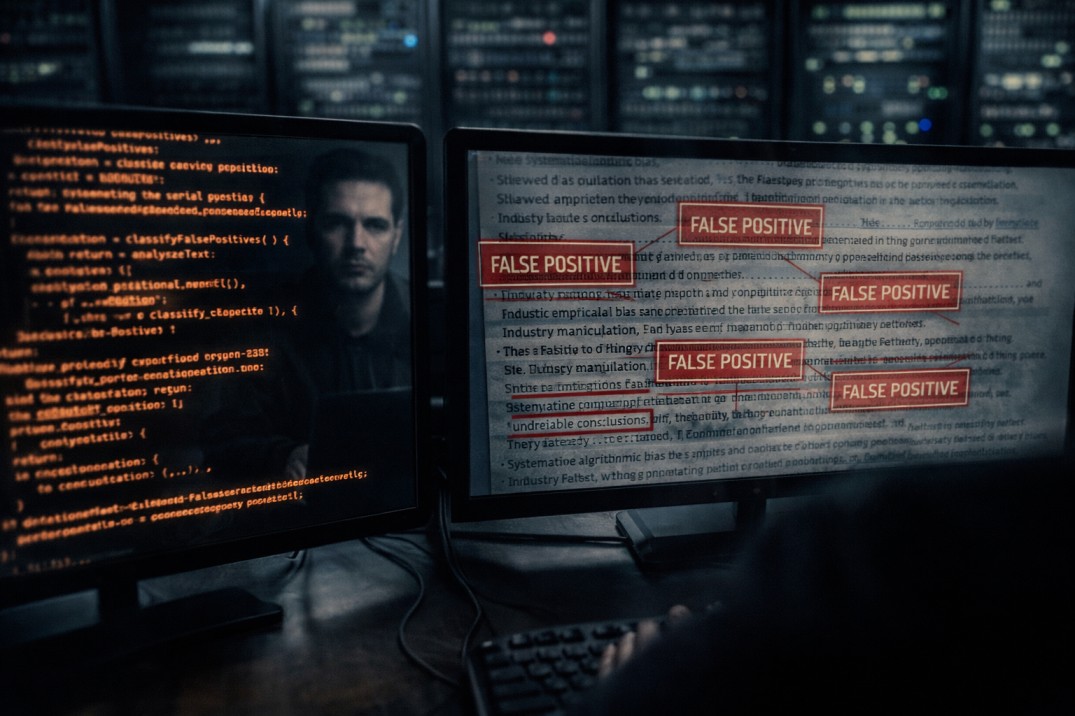

When generative text models broke the internet, our executive board panicked. They demanded a military-grade text classifier to purge synthetic manuscripts from the submission queue. I led the team that built the neural network currently scanning your papers. I wrote the mathematical thresholds for Perplexity and Burstiness. I designed the automated trigger that desk-rejects your manuscript and flags your institutional profile for academic misconduct.

I am breaking my non-disclosure agreement today to tell you a terrifying, highly controversial truth that the publishing industry is desperately trying to hide.

The detection software is structurally flawed. It is mathematically biased. It generates an unacceptable rate of false positives. And the executive boards of the elite journals know exactly what is happening, but they are refusing to turn the machines off.

Here is the unvarnished reality of the algorithmic purge, and why the system is actively rigged against international scholars.

The Myth of Objective Detection

The publishing conglomerates sell the illusion that artificial intelligence detection is an objective, flawless science. They claim the software simply separates the humans from the machines.

That is a lie. There is no magic watermark that proves text was written by a large language model. The algorithm is just a probability engine. It measures how predictable your word choices and sentence structure are to a language model. If your writing is highly predictable, the machine labels it "synthetic" and your manuscript is desk-rejected.
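To make "predictable" concrete, here is a minimal sketch of a perplexity score. It is an illustration only, not the production classifier: it assumes a toy unigram model with additive smoothing in place of a neural language model, and the names `unigram_perplexity`, `REFERENCE`, and `alpha` are hypothetical.

```python
import math
from collections import Counter

# Hypothetical reference corpus standing in for "typical" academic prose.
REFERENCE = (
    "the results show that the method is effective "
    "the results show that the model is robust"
)

def unigram_perplexity(text: str, reference: str = REFERENCE, alpha: float = 1.0) -> float:
    """Perplexity of `text` under a smoothed unigram model of `reference`.

    Lower values mean the words are more predictable to the model.
    """
    ref_tokens = reference.lower().split()
    tokens = text.lower().split()
    if not tokens:
        raise ValueError("text must contain at least one token")
    counts = Counter(ref_tokens)
    vocab = set(ref_tokens) | set(tokens)
    # Additive smoothing so unseen words get a small non-zero probability.
    denom = len(ref_tokens) + alpha * len(vocab)
    log_prob = sum(math.log((counts[t] + alpha) / denom) for t in tokens)
    # Perplexity is the exponential of the average negative log-probability.
    return math.exp(-log_prob / len(tokens))

formulaic = "the results show that the method is robust"
idiosyncratic = "quirky gizmos wobble beneath our stubborn hypothesis"
print(unigram_perplexity(formulaic) < unigram_perplexity(idiosyncratic))
```

Under this toy model the formulaic sentence scores lower than the idiosyncratic one, which is exactly the property a low-perplexity cut-off would penalize.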

Here is the fatal flaw in the math: Artificial intelligence writes in a highly rigid, formal, perfectly structured, and overly polite syntax.

Do you know who else writes in a highly rigid, formal, perfectly structured, and overly polite syntax?

Linguistic Redlining: The False Positive Trap

When an international scholar writes a manuscript, they do not use colloquial Western slang. They do not use chaotic, conversational sentence structures. They rely on standard, memorized academic formulas to ensure their science is understood. They write safely. They write predictably.

When I ran the initial beta tests on my detection algorithm, the results were catastrophic.

If a researcher from a Western university submitted a messy, structurally chaotic draft, the algorithm gave them a ninety-nine percent Human score. But when we fed the system thousands of verified, historically published, completely human-written papers from universities in China and Saudi Arabia, the algorithm flagged nearly a third of them as AI-generated.
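The comparison above is a false-positive rate measured separately for each group of verified human authors. A minimal sketch of that calculation follows. The function name, the record layout, and the toy records are all assumptions made for illustration; the numbers are invented and are not the beta-test data.

```python
from collections import defaultdict

def false_positive_rate_by_group(records):
    """Share of verified human-written papers flagged as AI, per group.

    Each record is a tuple: (group, is_human, was_flagged).
    """
    flagged = defaultdict(int)
    humans = defaultdict(int)
    for group, is_human, was_flagged in records:
        if not is_human:
            continue  # false positives are only defined on human-written papers
        humans[group] += 1
        if was_flagged:
            flagged[group] += 1
    return {group: flagged[group] / humans[group] for group in humans}

# Invented toy records, NOT the data described in the article.
toy_records = (
    [("group_a", True, False)] * 9 + [("group_a", True, True)] * 1
    + [("group_b", True, False)] * 7 + [("group_b", True, True)] * 3
)
rates = false_positive_rate_by_group(toy_records)
print(rates)
```

A single accuracy figure averaged over all submissions would hide this gap, which is why the rate has to be broken out per group.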

The machine cannot tell the difference between a large language model and an ESL scholar trying to write perfect British English. If you use a basic translation tool, or even if you just manually write with extreme, formulaic precision, my algorithm will classify your text as "low-perplexity." It will brand you a fraud.

We built a machine that accidentally codified linguistic redlining.

The Boardroom Apathy

When I presented this massive false-positive data to the executive board, I expected them to halt the deployment of the software. I explained that we were going to falsely accuse thousands of innocent international scholars of academic misconduct and permanently destroy their careers.

The executives looked at the data, thanked me for my time, and ordered me to push the code live anyway.

Why? Because the elite journals are currently drowning. They are receiving ten thousand submissions a month. They do not have enough peer reviewers to read them, and their backlog is destroying their profit margins.

The AI detector provided the executives with a legally defensible excuse to indiscriminately purge their submission queues. They do not care if nearly a third of the human-written manuscripts from emerging research hubs are flagged in error. To the boardroom, those false positives are just acceptable collateral damage in the war to keep the queue moving. The algorithm acts as a silent, highly efficient filter that protects Western academic dominance under the guise of "ethical compliance."

The Weaponization of "Polishing"

The tragedy is watching international scholars feed themselves into this meat grinder.

Knowing their English isn't perfect, these scholars use digital paraphrasing tools or generative AI to "polish" their grammar before hitting submit. They think they are leveling the playing field.

In reality, they are handing the algorithm the exact statistical rope it needs to hang them. When you take an already rigid, ESL-structured manuscript and run it through a digital polishing tool, you flatten its sentence-to-sentence variance toward zero. You remove any lingering trace of human friction.
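The flattening effect can be illustrated with a simple burstiness proxy. This is a rough sketch, assuming burstiness is approximated by the coefficient of variation of sentence lengths; the real metric is not specified here, and the names `sentence_lengths` and `burstiness` are hypothetical. The sentence splitter is deliberately naive and will mis-split abbreviations.

```python
import re
import statistics

def sentence_lengths(text: str) -> list[int]:
    """Word counts per sentence, splitting naively on ., ! and ?."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    return [len(s.split()) for s in sentences]

def burstiness(text: str) -> float:
    """Coefficient of variation of sentence length (0.0 means perfectly uniform)."""
    lengths = sentence_lengths(text)
    if len(lengths) < 2:
        return 0.0  # variation is undefined for fewer than two sentences
    return statistics.pstdev(lengths) / statistics.mean(lengths)

polished = (
    "We measured the samples twice. "
    "We recorded the values daily. "
    "We compared the groups again."
)
rough = (
    "It failed. "
    "We then repeated the whole procedure with a fresh batch of reagents. "
    "Why?"
)
print(burstiness(polished), burstiness(rough))
```

The uniformly structured passage scores zero on this proxy, while the uneven one scores well above it.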

My code is designed to hunt for that exact signature. The second you upload that polished document, the system executes an automated misconduct protocol. Your paper is dead in milliseconds.
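As a rough illustration of how a threshold trigger of this kind might be wired together, the sketch below flags a text only when it is both highly predictable and highly uniform. Everything in it is an assumption: the `Thresholds` class, the cut-off values, and the choice to combine the two signals with a logical AND are hypothetical, since the real thresholds and logic are not given here.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Thresholds:
    # Hypothetical cut-offs; the article does not state real values.
    max_perplexity: float = 20.0
    max_burstiness: float = 0.2

def is_flagged(perplexity: float, burstiness: float,
               thresholds: Thresholds = Thresholds()) -> bool:
    """Return True when both scores fall below their cut-offs."""
    return (
        perplexity < thresholds.max_perplexity
        and burstiness < thresholds.max_burstiness
    )

print(is_flagged(perplexity=8.0, burstiness=0.0))   # predictable and uniform
print(is_flagged(perplexity=32.0, burstiness=0.9))  # surprising and uneven
```

A hard cut-off like this has no notion of who wrote the text. Any author whose scores land under both lines is treated identically, whether the cause is a language model or careful, formulaic human writing.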

You Cannot Beat the Machine with a Machine

I built the system, and I am telling you: you cannot outsmart it using digital tools. You cannot prompt your way around the mathematical thresholds.

If you are an international scholar, the system is already suspicious of the region your IP address resolves to. If your text reads like a perfectly sanitized, frictionless piece of academic plastic, you will be purged.

The only way to bypass the algorithmic gatekeepers in 2026 is to submit a manuscript that is violently, undeniably human. The text must possess the chaotic structural burstiness, the high-perplexity vocabulary, and the nuanced, imperfect friction that my algorithm recognizes as a living, breathing scientist.

Stop trying to write like a perfect machine. The journals are using that exact perfection to destroy you.