In this highly confidential, deeply personal confession, a sitting member of a major university’s research ethics committee reveals the catastrophic, real-world consequences of using generative text in academic publishing. Moving beyond the fear of simple journal rejections, this executive briefing exposes how elite publishing conglomerates now report AI-assisted manuscripts directly to university provosts. The author details a tragic case study of a brilliant postdoctoral researcher whose career was permanently destroyed because an AI tool subtly altered the scientific variables in his methodology section. This article serves as the ultimate warning: outsourcing your academic writing to a machine is now legally classified as data fabrication, resulting in frozen funding, retracted papers, and institutional exile.

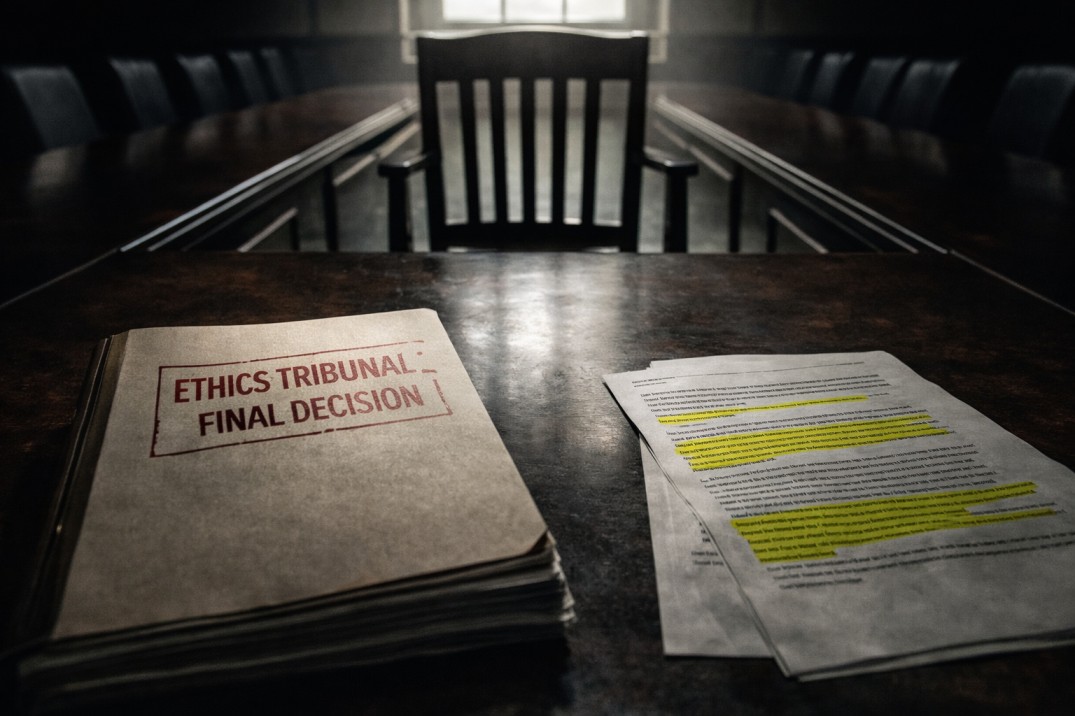

There is a specific, suffocating silence that falls over a conference room right before you end someone’s career.

I have sat on the research ethics committee of a major global university for the last six years. Historically, our tribunals dealt with blatant, malicious fraud: professors embezzling grant money or researchers intentionally doctoring Western blot images in Photoshop. Those cases were easy. The perpetrators knew exactly what they were doing, and they knew the risks.

But yesterday morning, I had to look across the table at a brilliant, exhausted international postdoctoral researcher and vote to freeze his funding, retract his published paper, and initiate the termination of his university contract.

He didn’t steal money. He didn’t intentionally fake his laboratory results. He made a single, desperate mistake that thousands of scholars are making right now, completely unaware of the devastating legal trap waiting for them.

He used a generative artificial intelligence model to "polish" the English in his methodology section.

I am writing this because the international academic community operates under a terrifying delusion. You believe that if a journal catches you using AI, the worst thing that happens is a desk rejection. You think you will just get an email telling you to try another journal. You are wrong. In 2026, elite publishers do not just reject you. They report you to us.

Here is the exact anatomy of how a thirty-second digital shortcut escalated into a permanent academic death sentence.

The Illusion of "Just Checking Grammar"

The scholar sitting across from my committee was in tears. His defense was the same one we have heard in every tribunal this year: "The data is one hundred percent real. I did the bench work. I just used the AI to fix my grammar because English is my third language. I didn't want the peer reviewers to judge my syntax."

I felt profound empathy for him. The systemic bias against non-native English speakers in academic publishing is exhausting and unfair. I understand exactly why he pasted his methodology into a large language model and asked it to "make this sound more professional."

The tragic problem is that he did not understand what the machine actually did to his text.

Large language models are not grammar checkers. They are predictive text engines that produce fluent, authoritative-sounding prose, even when that prose is entirely wrong. When the AI rewrote his methodology, it didn't just fix the commas. His text underwent what is known as "semantic drift."

The machine encountered highly complex, specialized scientific jargon that it did not fully comprehend. To make the sentences flow better and sound more "natural," the algorithm replaced specific chemical concentrations and crucial timing variables with statistically more common values and phrasings. The new wording sounded perfectly academic, but it described something scientifically impossible.

The author, thrilled with how beautifully the paragraph read, copied it, pasted it, and submitted it to a Scopus Q1 journal without manually checking the rewritten variables against his raw laboratory notes.

The Automated Institutional Escalation

When the manuscript hit the journal's submission portal, it passed the initial plagiarism check. It even survived the frontline triage and made it to a human peer reviewer.

But the peer reviewer, a senior expert in the same highly specialized field, read the methodology and immediately realized that the protocol it described was physically impossible. The chemicals listed, at the concentrations produced by the AI's "polishing," would have destroyed the biological samples.

Five years ago, the reviewer would have simply asked for a major revision, assuming it was a translation error.

But in 2026, journal conglomerates operate under a zero-tolerance policy for synthetic data. The reviewer flagged the paper. The journal then ran an in-depth algorithmic forensic scan of the manuscript and its metadata, which confirmed the presence of machine-generated text.

The journal did not just quietly reject the paper. Because the manuscript contained scientifically impossible, synthetic data, the publisher classified it as severe data fabrication. Under their strict compliance guidelines, publishers are legally obligated to report data fabrication to the author's host institution.

On Tuesday, our university provost received a formal legal notice by email from the publishing house. It included the scholar's name, the flagged manuscript, and notice of a permanent ban from every journal in the publisher's global network.

The Verdict of Structural Fraud

In an ethics tribunal, intent no longer matters.

The scholar begged us to look at his raw laboratory notebooks to prove he actually did the work. He pleaded with us to understand that it was just a translation tool error and that he never intended to lie.

But as an ethics board, our hands are legally tied by federal grant compliance rules. When researchers sign their names to a manuscript and submit it to a journal, they take absolute legal responsibility for every single word, variable, and citation in that document.

Ignorance is not a defense. Outsourcing your intellectual rigor to a machine is not a defense.

By submitting an AI-generated methodology that fundamentally altered the scientific variables, this scholar officially submitted fabricated data to the global scientific record. The fact that the machine fabricated the data, and not the human, is legally irrelevant. The human authorized it.

We had no choice. To protect the university's federal funding status and our own institutional reputation, we had to impose the maximum penalty. We froze his laboratory budget. We submitted formal retraction requests for his previous papers that used the same digital tools. His academic career, which took fifteen years of grueling sacrifice to build, is effectively over.

The Ultimate Warning

The era of the "harmless" digital shortcut is dead.

The algorithms guarding the gates of elite journals are ruthless, but the institutional compliance laws behind them are absolute. When you use artificial intelligence to rewrite your science, you are not just risking a rejection email. You are gambling your visas, your laboratory funding, and your entire professional reputation on a machine that does not know the difference between a grammatical improvement and scientific fabrication.

Do not let the desperate pressure to publish drive you into the algorithmic trap. The gatekeepers are watching, the publishers are reporting, and the ethics boards have no mercy left to give. Protect your data. Protect your words. Protect your career.