I have spent 35 years reviewing manuscripts from researchers across Asia, the Middle East, Latin America, and Eastern Europe. What I have witnessed is not a meritocracy. It is a system that rewards proximity to English-language academic culture far more than it rewards the actual quality of the science. In this article, I share what I have seen firsthand, what the data confirms, and why I believe the global research community is losing some of its most important scientific contributions to a bias that nobody in power wants to name out loud.

The Manuscript That Changed How I See This System

In 2009, I was reviewing a manuscript submitted to a Q2 business journal. The paper came from a researcher based in South Korea. The methodology was rigorous. The dataset was original, drawn from a five-year longitudinal study of manufacturing firms across three provinces. The theoretical contribution was genuinely interesting and opened a line of inquiry I had not seen approached from that angle before.

I recommended acceptance with minor revisions. The other reviewer recommended rejection. The primary reason stated in the review was, and I am recalling this as precisely as I can after all these years: "The writing lacks the clarity expected of scholarly communication." The editor rejected the paper.

I asked to see the other reviewer's full report. What I found was not a critique of argumentation, methodology, or contribution. It was a critique of sentence construction, word choice, and what the reviewer described as "unnatural phrasing." In other words, the paper was rejected because the author did not write like a native English speaker. The science was sound. The English was imperfect. The paper was gone.

I have thought about that manuscript many times over the past sixteen years. I think about it especially when people tell me that academic publishing is a pure meritocracy. It is not. And I think it is time we stopped pretending otherwise.

What We Actually Mean When We Say "Writing Quality"

One of the most common reasons cited for desk rejection and peer review rejection in indexed journals is poor writing quality. On the surface, this sounds entirely reasonable. Academic communication requires clarity. Journals have standards. Nobody disputes this.

But here is what I have observed across 35 years and thousands of manuscript evaluations: the phrase "poor writing quality" is doing two very different jobs in the peer review system, and we only ever talk about one of them.

The first job is legitimate. It flags manuscripts where the argument is genuinely unclear, where the logic is difficult to follow, where imprecise language obscures meaning in ways that affect the reader's ability to evaluate the science. This is a real problem and it is worth addressing.

The second job is something else entirely. It flags manuscripts written by researchers whose first language is not English, where the grammar is technically correct, the argument is clear, but the sentence rhythm, word selection, and stylistic choices feel unfamiliar to reviewers trained in American or British academic writing conventions.

These two situations are being treated as identical inside the peer review process. They are not identical. One is a communication problem. The other is a cultural familiarity problem. And conflating them has consequences that ripple across the entire global research community.

The Numbers Behind What I Am Describing

I want to be clear that what I am describing is not anecdote. It is a documented, measurable pattern that the publishing industry has largely chosen to manage quietly rather than address systemically.

A study examining publication rates across nations found that researchers from non-English-speaking countries are significantly more likely to receive reviewer comments citing language issues, even when the manuscripts have been professionally edited before submission. The rejection rate differential between researchers from North America and Western Europe versus researchers from Southeast Asia, the Middle East, and Latin America is substantial across most indexed journal categories.

Research published in journals focused on science and technology studies has shown that when the same manuscript is submitted with an institutional affiliation from a high-income English-speaking country versus a low-income non-English-speaking country, reviewer scores differ in ways that cannot be explained by content alone.

The most telling data point I have encountered came from a journal editor I worked with closely for several years. He told me, privately, that when his journal moved to fully anonymised submissions where institutional affiliations were hidden, the acceptance rate for manuscripts from Asian researchers increased noticeably in the first two years. Nothing changed about the manuscripts. What changed was the information the reviewers had access to.

That is not a meritocracy. That is a visibility bias operating under the cover of quality standards.

What Researchers From Non-English Countries Are Actually Up Against

Let me make this concrete, because I think the abstract description of "language bias" understates the lived reality of researchers navigating this system from outside the English-speaking academic world.

A researcher at a university in Egypt, Vietnam, Brazil, or Kazakhstan is typically working in an environment where the following are all simultaneously true. Their primary teaching, thinking, and daily communication happen in a language other than English. Their institution may have limited access to the most current indexed journals, which means their literature review is already working with constraints. The academic writing conventions they learned during their training may differ from the conventions dominant in the journals they are targeting. The professional editing support available to them is often expensive relative to their institutional budget. And when they receive a rejection citing "language concerns," they frequently have no way of knowing whether the concern is about their argument or about their accent on the page.

Now compare this to a researcher at a university in the United Kingdom or the United States. They write in the same language the journal operates in. They have full access to the literature. Their writing conventions match the reviewers' expectations by default. Their institution often provides editorial support. And when they are rejected, the feedback they receive is more likely to engage with the substance of their work.

These two researchers are competing in the same system. They are not competing on the same terms.

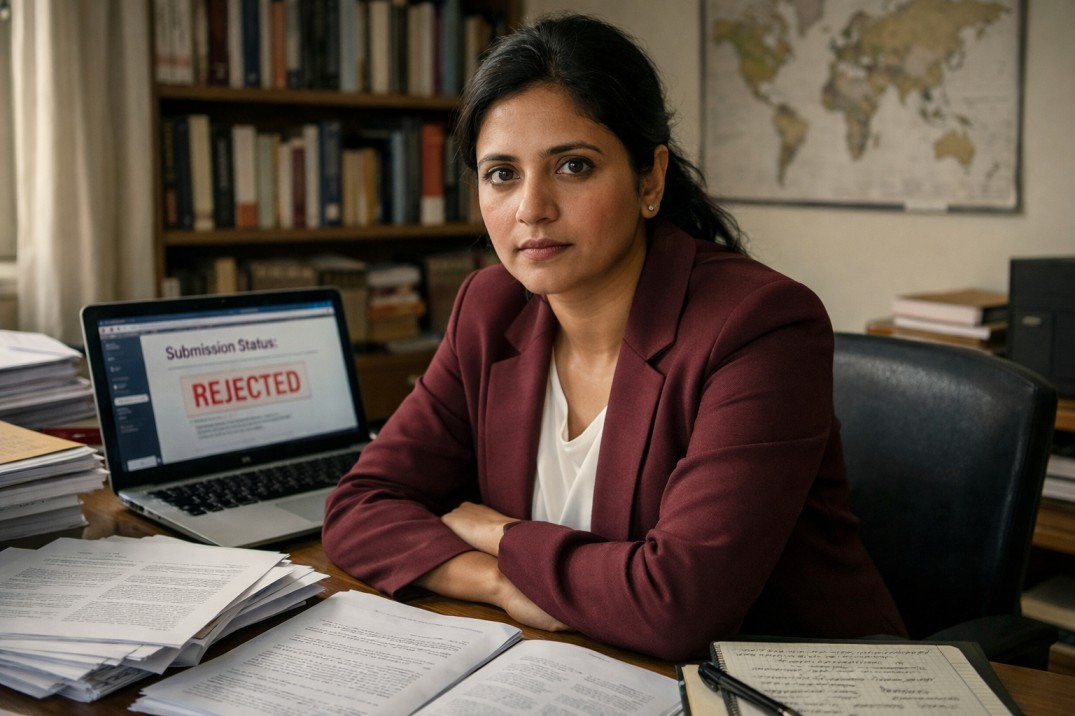

The Cost to Science That Nobody Calculates

This is the part of the conversation that I believe is most urgently overlooked. When we talk about language bias in publishing, we tend to frame it as a fairness issue. And it is a fairness issue. But it is also something else. It is a scientific loss.

The researchers being filtered out of indexed publication are not weaker scientists. Many of them are working on questions that are deeply important precisely because they emerge from contexts that Western academic institutions are not investigating. A researcher studying water resource management in the Mekong Delta has access to data, observations, and local knowledge that no researcher in Cambridge or Chicago can replicate. A researcher studying informal economic systems in Lagos is generating insights that global development economics needs. A researcher studying traditional medicine systems in rural India is working at an intersection of culture, biology, and public health that indexed journals claim to value.

If those researchers cannot publish because their writing sounds different, the knowledge disappears. It does not get produced somewhere else by someone with better English. It simply does not enter the scientific record.

We talk a great deal in academic publishing circles about the importance of diverse perspectives and global research representation. We do not talk nearly enough about the structural features of our own system that make those things systematically impossible at scale.

What I Have Seen Work and What I Know Does Not

In 35 years, I have watched several approaches attempted and I have a clear view of what actually moves the needle.

Language editing requirements placed on authors do not solve the problem. They shift the financial burden onto the researchers who already face the greatest resource disadvantage. Telling a researcher from a developing country to hire a professional English editor before submission is not a solution. It is a cost that compounds the existing inequality.

Desk rejection based on language concerns, applied before any substantive review, is among the most damaging practices in the current system. It removes any possibility of scientific merit being evaluated and concentrates gatekeeping power in the hands of whoever reads the abstract first. This practice should end.

What I have seen work is structured reviewer training that explicitly distinguishes between argument clarity and stylistic familiarity. When reviewers are trained to ask "do I understand what this researcher is arguing" rather than "does this sound like the papers I usually read," the outcomes for international submissions improve.

What I have seen work is post-acceptance language support funded by journals rather than authors. Several open-access publishers have moved in this direction. It is not expensive relative to the overall cost of publishing. It is a choice about where to place the financial responsibility.

What I have seen work is double-blind review with full institutional anonymisation. Not just hiding the author's name, but hiding the university, the country, and any other contextual information that allows reviewers to make assumptions about the author before engaging with the content. This is technically straightforward. The resistance to it is institutional, not logistical.

A Direct Message to Every International Researcher Reading This

I want to speak to you directly for a moment, and I want to be honest with you in a way that I think the system rarely is.

The rejection you received may not have been about your science. It may have been about a system that was not built with your success in mind and has not been meaningfully redesigned since. That does not mean you cannot succeed in this system. Many international researchers do, every day, and their published work represents some of the most important contributions in their fields. But it means you need to understand the system as it actually operates, not as it claims to operate.

Target journals that have demonstrated genuine international authorship in their published record. Look at the last three years of publications and count the institutional affiliations. If 90 percent of published authors are from five English-speaking countries, that journal's commitment to global scholarship is a statement in a policy document, not a reality in its editorial decisions.

Invest in your abstract. The abstract is the first thing an editor reads, and in a system with high desk rejection rates it is often the only thing that determines whether your paper enters review at all. Write it last, when your argument is completely clear to you, and have someone who was not involved in the research read it and tell you what they think the paper is arguing.

Find collaborators in institutions that have publishing track records with your target journals. This is an imperfect solution to a systemic problem, but it is real and it works. Co-authorship with someone from a well-known institution in your target journal's primary geography changes how your submission is perceived at the desk review stage.

And do not stop. The researchers who persisted, who understood the system clearly and worked it strategically, are the ones whose names I now see in the journals that once filtered them out.
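For anyone who wants to run the affiliation count on a target journal, here is a minimal sketch in Python. It assumes you have already collected, by hand or from the journal's website, one country name per published author for the last three years. The function names, the set of "core" countries, and the sample data are all hypothetical and chosen only for illustration; the 90 percent figure is a rule of thumb, not a formal threshold.

```python
from collections import Counter

# Assumption: the five countries are not named in the article, so this set is
# an illustrative guess. Replace it with whatever grouping fits your field.
CORE_COUNTRIES = {
    "United States", "United Kingdom", "Canada", "Australia", "New Zealand",
}


def core_share(author_countries, core=CORE_COUNTRIES):
    """Return the fraction of author affiliations located in `core` countries."""
    if not author_countries:
        raise ValueError("no affiliations to count")
    counts = Counter(author_countries)
    in_core = sum(n for country, n in counts.items() if country in core)
    return in_core / len(author_countries)


def top_countries(author_countries, n=5):
    """Return the n most frequent affiliation countries with their counts."""
    return Counter(author_countries).most_common(n)


# Made-up sample: one entry per published author, not real journal data.
sample = (
    ["United States"] * 12
    + ["United Kingdom"] * 5
    + ["Australia"]
    + ["South Korea"]
    + ["Brazil"]
)

share = core_share(sample)
print(f"{share:.0%} of affiliations are in the core set")
print(top_countries(sample, n=3))
```

A high share does not prove bias on its own, since submission volumes differ by country, but it is a quick way to compare candidate journals before deciding where to submit.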

What the Publishing Industry Owes This Conversation

I have had versions of this conversation privately with editors, publishing directors, and journal board members for more than a decade. The response is almost always sympathetic in private and cautious in public. Nobody wants to be on record saying that their journal discriminates. Nobody wants to audit their own reviewer pool and publication data and confront what they find. Nobody wants to redesign processes that are deeply embedded in institutional habit.

But science is a global enterprise in a way it has never been before. The problems facing humanity, from climate change to antimicrobial resistance to food security to urban inequality, are not concentrated in English-speaking countries. The data, the field observations, the contextual knowledge required to address those problems are distributed across the entire world. A publishing system that structurally advantages one linguistic and cultural tradition is not fit to serve that mission.

I am 35 years into this work. I have watched this industry resist change with remarkable consistency. But I have also watched open access transform a model that the establishment said was untouchable. I have watched preprint culture shift the timeline of scientific communication in ways that journals are still adjusting to. Change in academic publishing is slow. It is not impossible.

The researchers being filtered out today are not going to wait indefinitely for the system to become fair. They are finding ways around it, through preprints, through regional journals, through alternative credentialing. The indexed journal system can either evolve to include them genuinely, or it can watch its own relevance erode as the knowledge it refused to publish finds other channels.

That is not a threat. That is an observation from someone who has watched this industry long enough to know how these cycles tend to end.

Dr. Marcus Eldridge has served as a manuscript reviewer for over 40 indexed journals, worked as associate editor for three Q1 publications, and has supported more than 2,000 researchers through the academic publication process over a 35-year career. He currently serves as Senior Research Advisor at Eldenhall Research.