For years, the academic publishing conversation has treated predatory journals as the primary villain in the integrity crisis facing global research. I disagree. After 35 years working inside legitimate institutions and indexed journals, I have come to believe that the metrics obsession inside credible universities and funding bodies causes more long-term damage to science than every predatory publisher operating today combined. Predatory journals are a symptom. The disease is how we define, measure, and reward research success, and until we are willing to name that honestly, we will keep treating symptoms while the underlying condition worsens.

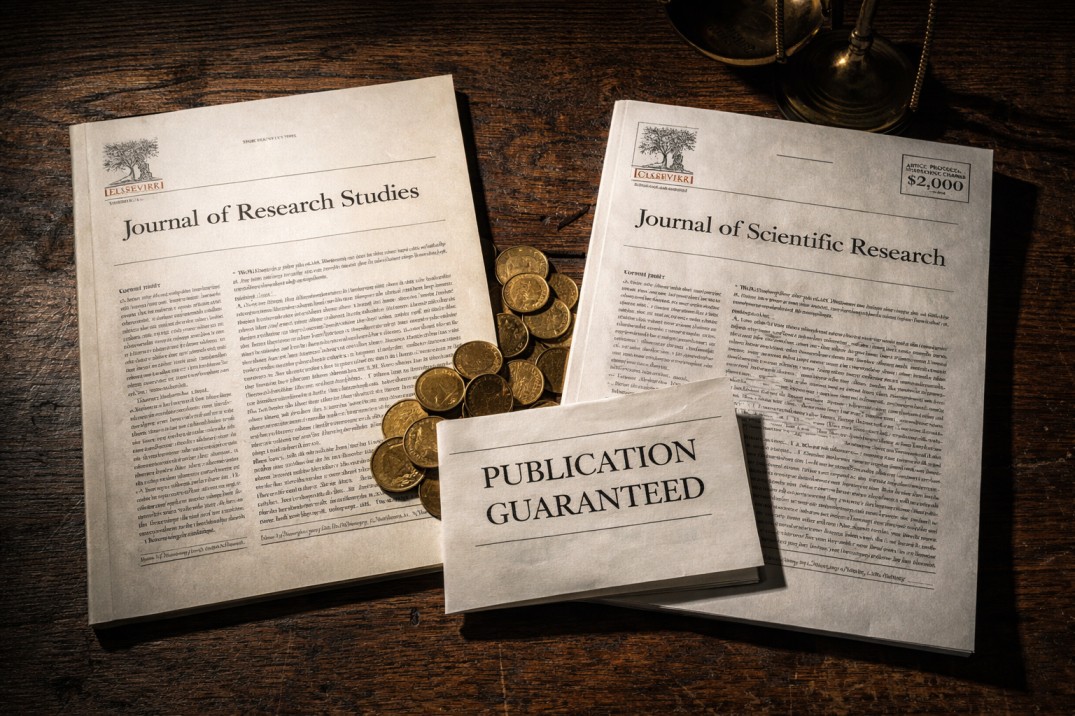

The Villain We All Agreed On

There is a particular kind of comfort in having a clearly identified enemy. In academic publishing, predatory journals have served that function remarkably well for the past fifteen years. They are easy to describe. They charge authors fees in exchange for publication with little or no genuine peer review. They mimic the appearance of legitimate journals, using names and layouts designed to suggest credibility they do not possess. They have flooded the indexed and semi-indexed literature with work that was never properly evaluated and should never have been published. They target researchers under publication pressure, particularly those in developing academic systems who are desperate for indexed credentials.

All of that is real. Predatory journals are a genuine problem, and I have never argued otherwise. But here is what I have come to believe after watching this conversation unfold for more than a decade: the intensity of focus on predatory publishing is functioning, whether intentionally or not, as a distraction from a more systemic and more damaging problem operating inside the institutions we consider legitimate.

The predatory journal preys on the desperation that the legitimate system creates. Address the legitimate system's dysfunction, and predatory publishing becomes significantly less attractive. Continue ignoring that dysfunction while loudly condemning predatory publishers, and you solve nothing while feeling productive. That is where we are today.

What Created the Market for Predatory Publishing

Predatory journals did not emerge from nowhere. They emerged from a specific institutional environment that created both the supply of and the demand for what they offer.

The demand side is straightforward. Over the past two decades, universities and research institutions worldwide adopted publication counts and citation metrics as primary criteria for hiring, tenure, promotion, and funding. Researchers who do not publish in indexed journals at sufficient frequency face real career consequences. In many institutional environments, particularly in Asia, the Middle East, and parts of Eastern Europe and Latin America, publication in indexed or semi-indexed journals is not a career advantage. It is a survival requirement.

When you create a system in which researchers face serious professional consequences for not publishing frequently enough, and when the legitimate publication system is slow, competitive, and structurally difficult to access for researchers outside the English-speaking academic mainstream, you have created exactly the conditions that predatory publishing exploits. The predatory publisher did not manufacture the desperation. The legitimate system did. The predatory publisher simply found a way to profit from it.

This means that every institutional policy that increases publication pressure without improving access to legitimate publishing is, functionally, a recruitment mechanism for predatory journals. And those policies are being enacted and maintained by the very institutions that loudly condemn predatory publishing in their official statements.

The Metrics Obsession and What It Actually Produces

Let me describe what I have observed the metrics obsession produce inside legitimate institutions, because I think its costs are significantly underappreciated.

When publication count becomes a primary career metric, researchers face rational incentives to produce more papers rather than better ones. This is not a character failure. It is a predictable response to an institutional incentive structure. If your promotion depends on publishing twelve papers in five years, you do not write fewer, deeper papers. You divide your research into the smallest publishable units, write each unit as quickly as possible, and submit it to the highest-ranked journal that will accept it within your timeline.

This practice has a name in research methodology circles: salami slicing. It is widely known. It is universally frowned upon in official institutional statements. It is functionally encouraged by the incentive systems those same institutions maintain. The result is a literature dense with incremental, narrowly scoped studies that individually add very little to knowledge but collectively consume enormous amounts of journal space, reviewer time, and reading attention. The important work, meaning the foundational studies and the theoretical contributions that require years of careful development, gets produced less frequently because the institutional system does not reward the time investment it requires.

When citation count becomes a primary metric, the incentive shifts toward publishing in ways that generate citations rather than in ways that advance knowledge. Researchers learn to frame their work in relation to heavily cited papers, to cite strategically, and to position their contributions as extensions of already-recognized work rather than challenges to it. The literature becomes self-referential in ways that slow the entry of genuinely new ideas.
I want to be careful here to distinguish between individual researchers making rational choices within a broken system and a broken system producing bad outcomes. The researchers navigating these incentives are not the problem. They are responding sensibly to the environment in which they operate. The problem is the environment.

What Legitimate Institutions Do That Predatory Journals Cannot

Here is the part of this argument that I know makes people in institutional leadership uncomfortable, because it requires looking at behaviors inside legitimate, reputable, indexed journals and comparing them unfavorably with what we associate with predatory publishing.

Predatory journals promise publication in exchange for payment with no genuine peer review. This is their defining characteristic and their primary offense against scientific integrity. Now consider the following practices, which occur to varying degrees within legitimate indexed publishing.

Special issues in legitimate journals sometimes operate with significantly accelerated review timelines and reduced scrutiny compared with standard submissions. In some cases, guest editors for special issues have personal or professional relationships with contributing authors. The peer review in these contexts is genuine in that it occurs, but its rigor is not always equivalent to that of standard submission review.

Journals owned by large commercial publishing houses face financial pressures that influence editorial decisions in ways that are rarely made transparent. A journal that generates significant subscription and article processing charge revenue has institutional interests that are not identical to the interests of scientific quality. Those interests do not always conflict. But they are not always aligned either.

Some legitimate journals have published work that later turned out to be seriously flawed, manipulated, or, in a small number of documented cases, fabricated, and the peer review process did not catch it. The reproducibility crisis is full of examples. Those papers appeared in journals with impact factors, editorial boards, and peer review processes. The legitimacy credentials were real. The quality assurance failed anyway.

I am not saying that legitimate journals are equivalent to predatory journals. They are not, and the distinction matters.
I am saying that the line between predatory and legitimate is sometimes drawn by credentials and reputation rather than by actual quality control outcomes. And a conversation about research integrity that focuses entirely on predatory journals while ignoring quality failures in legitimate publishing is not a complete conversation.

The Researchers Caught in the Middle

The people who pay the highest price for this dysfunction are not the researchers at well-resourced institutions with strong mentorship, institutional support, and clear pathways to legitimate publication. Those researchers navigate the system with difficulty, but they navigate it. The researchers who pay the highest price are the ones the legitimate system has not made room for.

Consider a researcher at a university in a country where English-language academic writing is not taught systematically, where the library budget does not cover access to the journals they need to read in order to publish, where the tenure committee requires five indexed publications in three years but provides no editorial support, and where the gap between their current research quality and Q1 journal expectations is real and significant. That researcher faces a choice the system has designed badly. They can spend years slowly building toward legitimate publication while their career stagnates. They can find collaborators at internationally recognized institutions and effectively subordinate their intellectual contribution to someone else's institutional credibility. Or they can pay a predatory journal and survive the immediate institutional pressure.

The predatory journal offers a transaction. The legitimate system, in many cases, offers an aspiration with no practical pathway. When we condemn researchers who publish in predatory journals without examining the institutional environment that made predatory publishing their most available option, we are blaming individuals for a systemic failure. That is not analysis. That is the system protecting itself from examination.

The Specific Changes That Would Actually Matter

I want to move from critique to specifics here, because the conversation about research integrity too often stays at the level of principle without descending to the level of institutional practice, where change actually happens or fails to happen.

The single most effective change legitimate institutions could make to reduce the predatory publishing problem is to stop using raw publication count as a primary career metric and replace it with a smaller number of contributions evaluated through genuine expert review. This reduces the publication pressure that makes predatory journals attractive. It also produces a literature that is less cluttered with incremental work and more representative of genuine scientific contribution. The resistance to this change is not intellectual. Every serious person in this conversation knows that counting papers is a poor proxy for evaluating science. The resistance is administrative. Evaluating quality is harder than counting papers, and institutions are consistently reluctant to do harder things when easier things are available.

The second most effective change is to invest institutional resources in supporting researchers who face real access barriers to quality publication. This means editorial support, language support, mentorship from experienced publication navigators, and honest guidance about journal selection and manuscript preparation. This support exists at some well-resourced institutions and is essentially absent at others. Making it a standard institutional responsibility rather than an individual privilege would change the calculation that drives researchers toward predatory options.

Third, the indexed journal system needs transparent audit mechanisms that do not currently exist at sufficient scale.
When a journal's editorial practices diverge significantly from its stated quality commitments, there should be a formal process for identifying that divergence and acting on it. The current system relies almost entirely on reputation, which is slow to update, easily gamed, and systematically biased toward journals with long institutional histories.

What This Means for the Broader Mission of Science

I have been in academic publishing for 35 years because I believe in what science is supposed to accomplish. I believe in knowledge that is reliable, reproducible, and built through the honest accumulation of evidence over time. I believe in a global scientific community that includes the best researchers regardless of where they were born, what language they learned first, and what institutional address appears on their correspondence.

The predatory publishing problem is real. But it is downstream of a more fundamental problem: the legitimate system has allowed metrics to replace judgment, speed to replace rigor, and institutional self-interest to compete with scientific integrity. Predatory publishers did not create those conditions. They recognized and exploited them.

The question the academic publishing community needs to ask is not only how to eliminate predatory journals. That question is important but insufficient. The more important question is what in the legitimate system created the conditions that made predatory publishing a rational choice for so many researchers, and what we are willing to change to address it. Until institutions are willing to ask and honestly answer that question, predatory publishing will continue to thrive. Not because researchers lack integrity. Because the system they operate in has put integrity out of reach for too many of them.

That is not a comfortable conclusion. It was not comfortable to write. But it is what 35 years of watching this system operate has taught me.

Dr. Marcus Eldridge has served as a manuscript reviewer for over 40 indexed journals, worked as associate editor for three Q1 publications, and has supported more than 2,000 researchers through the academic publication process over a 35-year career. He currently serves as Senior Research Advisor at Eldenhall Research.